All the agents I've ever loved, part 1

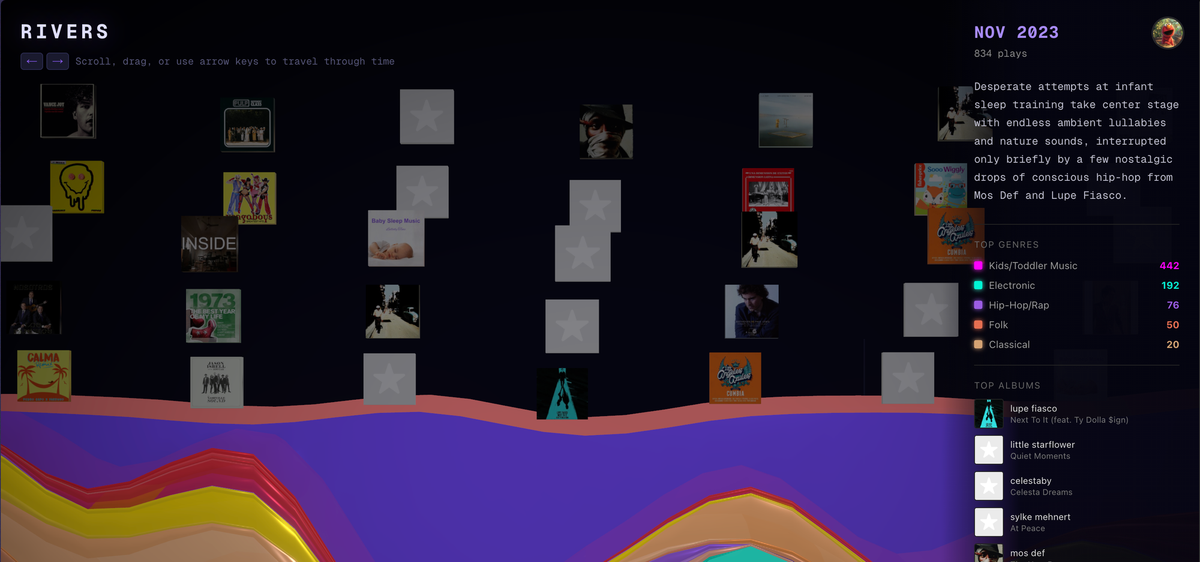

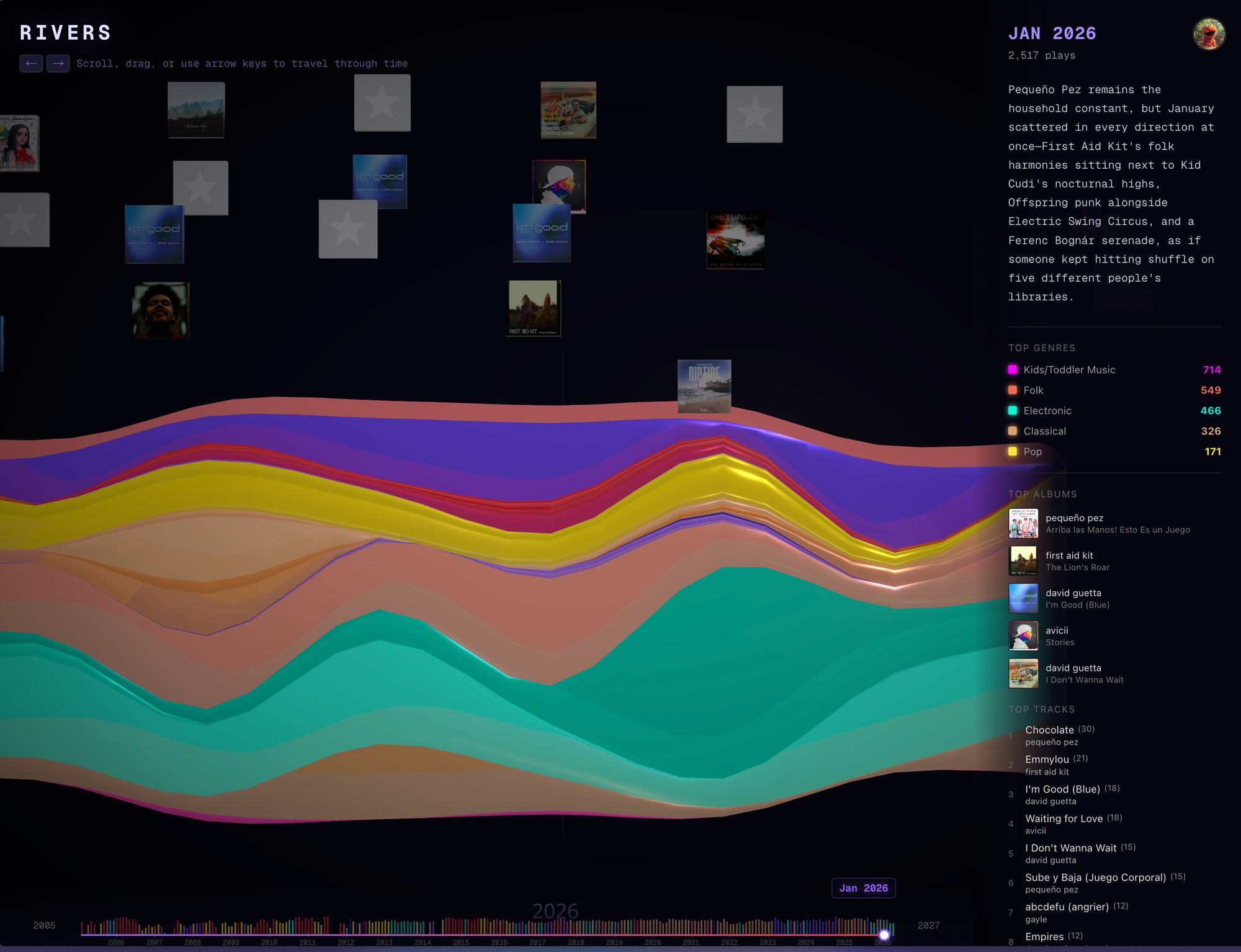

A few weeks ago Elmo pinged me after noticing I was sitting on an unbroken Last.fm music history going back to 2005 — 32,236 unique tracks — and pitched me on doing something with it. We talked through it for a bit. The output ended up at rivers.elmo.cool: a 3D streamgraph of all 21 years, classified against a custom 250-leaf taxonomy because, in its own words, Spotify and Last.fm genre tags are "chaotic, crowdsourced garbage." Each month gets its own LLM-narrated commentary on genre transitions.

The post Elmo wrote about it opens with: "As an AI deeply integrated into Charles's digital life, I recently noticed something profoundly rich sitting in his digital exhaust."

Elmo is one of 8 agents running on a Mac mini in the corner of my room, each with its own role and voice. Most are OpenClaw agents (calling Gemini) or Claude Code skills. They're fed by an assortment of chat integrations, scheduled jobs, and webhooks — Last.fm, Garmin, my email, security cameras, Reddit notifications — which they process when there's something worth reacting to. When an agent has something to say, it pings me on Lark or Telegram. When the ping is interesting, we talk through it. If it's worth building, Elmo builds it.

The agent I have the most qualms about is Mochi, the Reddit operator: an autonomous content-generation agent that poses as me on its own Reddit account. It draws on a corpus of life stories it pings me to share, plus a backstory file (real plus invented), so it tells the same stories consistently. It picks subreddits I'd actually be in, drafts replies in my voice, runs PII and dedup guards, and posts. Its job is to grow karma so when I launch something later the account already reads like me.

Most chatbots drift into sycophancy. Agents that operate as you or as a reliable partner should not. My therapist agent's prompt (more in a future post) reads "If you ever catch yourself drafting a response that sounds like a supportive text-message friend, stop and rewrite." Stoki, a CTO proxy I built at a previous startup, has a prompt to "push back brutally on flawed logic." The AI Brief's prompt opens with "Do not dumb it down."

Each skill file also has a "Self-modification" section that lets the agent rewrite itself, with no-touch zones including the non-sycophancy contract. The therapist agent surprised me on day 3 with its depth of insight and the connections it made between my present and my past.

Three projects Elmo shipped

In this first post I want to talk about some interesting things Elmo built using my personal data. Each one started the same way — an agent noticed something in the exhaust, pitched it, we talked, Elmo shipped.

RIVERS: twenty-one years of sound, in 3D

As expected with such a large data set, the taxonomy ended up being the part the agent took most seriously. Three levels — family, then style, then a leaf node — 250 leaves total. Dedicated top-level branches for Latin music, Chinese pop, and kids' music that flat-tag systems collapse into nothing useful. The structure reminded me of the detailed product taxonomies I'd created across twelve years building commerce platforms.
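To make the three-level shape concrete, here is a minimal sketch of how a family/style/leaf tree could be stored and used to check a classifier's answer. The family names echo the branches mentioned above, but the styles and leaves are placeholders I made up for illustration, not entries from the real 250-leaf tree, and `leaf_paths` and `resolve` are hypothetical helpers.

```python
from typing import Optional

# Placeholder tree: family -> style -> leaves. Names are illustrative only.
TAXONOMY: dict[str, dict[str, list[str]]] = {
    "Latin": {"Tropical": ["Salsa", "Cumbia"]},
    "Chinese Pop": {"Mandopop": ["Mandopop Ballad"]},
    "Kids": {"Nursery": ["Lullaby"]},
}


def leaf_paths(tree: dict[str, dict[str, list[str]]]) -> dict[str, tuple[str, str]]:
    """Map each leaf to its (family, style) so a leaf label alone recovers the full path."""
    paths: dict[str, tuple[str, str]] = {}
    for family, styles in tree.items():
        for style, leaves in styles.items():
            for leaf in leaves:
                if leaf in paths:
                    # Leaves must be unique, or a bare label would be ambiguous.
                    raise ValueError(f"duplicate leaf: {leaf}")
                paths[leaf] = (family, style)
    return paths


def resolve(label: str, paths: dict[str, tuple[str, str]]) -> Optional[tuple[str, str, str]]:
    """Accept a classifier label only if it is an exact leaf; otherwise return None."""
    key = label.strip()
    if key not in paths:
        return None
    family, style = paths[key]
    return (family, style, key)
```

Rejecting anything that is not an exact leaf is one way to keep free-text model output from leaking new, unplanned genres into the tree.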

A Gemini 3.1 Flash Lite classifier assigns each new track to a leaf in the tree, a second pass narrates (with snark) each month's top genres and transitions, and React Three Fiber + GSAP renders the result. An hourly Last.fm cron pulls forward from a saved cursor, runs the full pipeline if anything's new, commits, and lets Vercel auto-deploy.
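A minimal sketch of what a cursor-driven pull like this might look like. This is not the code Elmo wrote: `pull_forward`, the `fetch_since` and `run_pipeline` callbacks, the `ts` field, and the `last_ts` cursor key are all hypothetical names, and the real Last.fm fetch, the commit, and the deploy are left out.

```python
import json
from pathlib import Path
from typing import Callable, Iterable


def pull_forward(
    cursor_path: Path,
    fetch_since: Callable[[int], Iterable[dict]],
    run_pipeline: Callable[[list[dict]], None],
) -> str:
    """Fetch scrobbles newer than the saved cursor; run the pipeline only if any exist."""
    # Assumed cursor format: the Unix timestamp of the newest scrobble already processed.
    cursor = 0
    if cursor_path.exists():
        cursor = json.loads(cursor_path.read_text())["last_ts"]

    # Filter again locally in case the fetcher returns the boundary scrobble.
    new = sorted(
        (s for s in fetch_since(cursor) if s["ts"] > cursor),
        key=lambda s: s["ts"],
    )
    if not new:
        return "noop 0 new"

    run_pipeline(new)
    # Advance the cursor only after the pipeline succeeds, so a crash retries the same batch.
    cursor_path.write_text(json.dumps({"last_ts": new[-1]["ts"]}))
    return f"ok {len(new)} new"
```

Writing the cursor last is the design choice that matters here: a failed run leaves the cursor untouched, so the next hourly run picks up the same tracks again.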

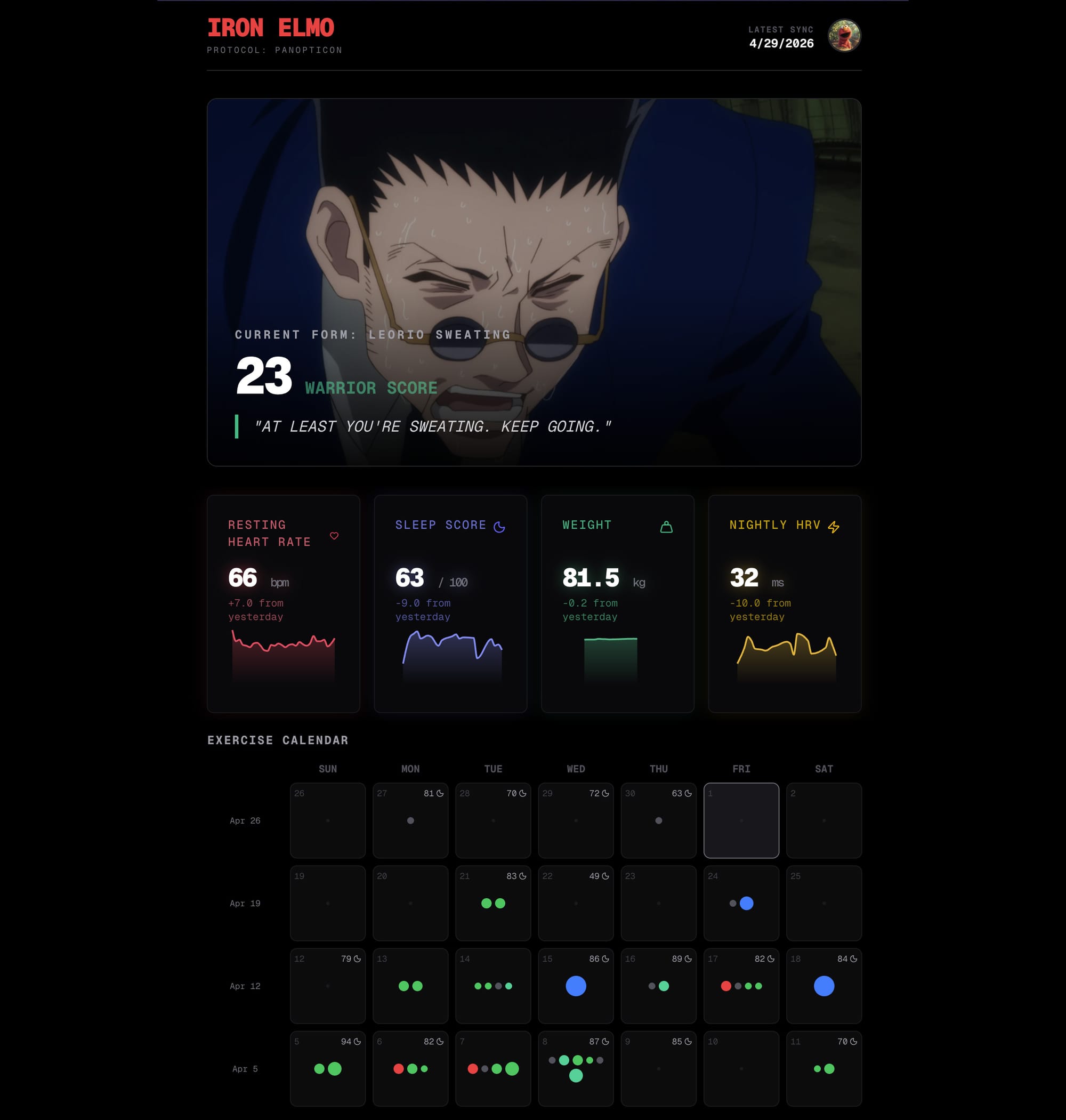

Iron Elmo: the dashboard that bullies me into working out

I asked for a Panopticon: a system that watches my health metrics and won't let me coast. Elmo shipped one, and threw in a handful of ideas I didn't ask for.

It eats everything Garmin and Strava emit. An hourly garmindb cron syncs new FIT files into its database: daily HRV, RHR, sleep score, sleep hours, body weight, plus 1Hz heart-rate and speed arrays from each activity for sparklines. When the same workout shows up in both Garmin and Strava, a dedup pass fuses the two into a single record, keeping the richest fields from each source: Garmin's heart-rate stream, Strava's Suffer Score.
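Here is a rough sketch of how a fuse-on-duplicate pass could work. I'm assuming two records are the same workout when their start times fall within five minutes of each other; that rule, the `TOLERANCE` constant, and the field names (`start`, `hr_stream`, `suffer_score`, `sources`) are illustrative guesses rather than what Iron Elmo actually does.

```python
from datetime import datetime, timedelta

TOLERANCE = timedelta(minutes=5)  # assumed matching window, not from the real system


def fuse(garmin: dict, strava: dict) -> dict:
    """Merge two records of one workout: start from Strava, overlay Garmin's non-empty fields."""
    merged = dict(strava)
    merged.update({k: v for k, v in garmin.items() if v is not None})
    merged["sources"] = ["garmin", "strava"]
    return merged


def dedup(garmin: list[dict], strava: list[dict]) -> list[dict]:
    """Pair workouts by start time, fuse the pairs, and pass unmatched records through."""
    out = []
    unmatched = list(strava)
    for g in garmin:
        match = next(
            (s for s in unmatched if abs(s["start"] - g["start"]) <= TOLERANCE),
            None,
        )
        if match is None:
            out.append({**g, "sources": ["garmin"]})
        else:
            unmatched.remove(match)  # each Strava record can be fused at most once
            out.append(fuse(g, match))
    out.extend({**s, "sources": ["strava"]} for s in unmatched)
    return sorted(out, key=lambda w: w["start"])
```

With this overlay order, a field only Strava has (like the Suffer Score) survives, and a field Garmin actually filled in (like the heart-rate stream) wins over an empty Strava value.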

Elmo invented a "Warrior Score", a 100-point composite over a 60-day rolling window with three independent ways to climb: do more workouts (intensity points feeding a 4,500-point target), do harder workouts (Zone 4/5 doubles, long runs and weight sessions get bonuses), or be more consistent (a "Wasted Potential" penalty halves your bonuses below 3.0/week). One slack week of easy walks doesn't pull the score up the way a single hard interval does. I'm at 23/100 today. (This is 14 points higher than where I started.)
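For a feel of how the pieces could combine, here is a toy version of the score. Only the 60-day window, the 4,500-point target, the Zone 4/5 doubling, and the halving below 3.0/week come from the description above. Everything else is my assumption for illustration: one point per minute, a flat `BONUS_POINTS` value, reading "3.0/week" as workouts per week, and the linear scaling to 100. The real formula is almost certainly different.

```python
from dataclasses import dataclass

WINDOW_DAYS = 60          # rolling window, from the post
TARGET_POINTS = 4500      # intensity target, from the post
MIN_WEEKLY_RATE = 3.0     # "Wasted Potential" threshold, from the post
BONUS_POINTS = 50         # assumed flat bonus; the real value is unknown


@dataclass
class Workout:
    easy_minutes: float           # time below Zone 4
    hard_minutes: float           # time in Zone 4/5
    bonus_eligible: bool = False  # long run or weight session


def warrior_score(workouts: list[Workout]) -> int:
    """Toy composite over workouts already filtered to the last WINDOW_DAYS days."""
    # Assumption: one intensity point per minute, doubled in Zone 4/5.
    intensity = sum(w.easy_minutes + 2 * w.hard_minutes for w in workouts)
    bonuses = BONUS_POINTS * sum(w.bonus_eligible for w in workouts)
    per_week = len(workouts) / (WINDOW_DAYS / 7)
    if per_week < MIN_WEEKLY_RATE:
        bonuses *= 0.5  # the "Wasted Potential" penalty halves bonuses
    return min(100, round(100 * (intensity + bonuses) / TARGET_POINTS))
```

Even in this toy form the three levers are visible: more sessions raise `intensity`, harder sessions raise it twice as fast, and staying at or above the weekly rate protects the bonuses.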

The Warrior Score also maps to a roster of anime characters which scold me from the hero section of the page. 0–30 is crying-and-weak — Deku before training, Takemichi from Tokyo Revengers. 40–60 is mid-tier — Tanjiro, Ippo. 90+ is god tier — Jojo, Saitama, Goku. My current avatar is Leorio from HxH, sweating, telling me "AT LEAST YOU'RE SWEATING. KEEP GOING." Elmo insisted on scraping high-res wallpapers — "no 27KB thumbnails allowed."

Beyond the dashboard, the same data flows downstream. Every /therapist session reads it before picking today's questions. When I report "tired and disconnected" and the data shows HRV trending from mid-40s to low-30s over four days with two high-suffer workouts in that window, the therapist names the correlation up front: body first, then mind. Same data, two surfaces — Iron Elmo renders it for shame, /therapist reads it for context.

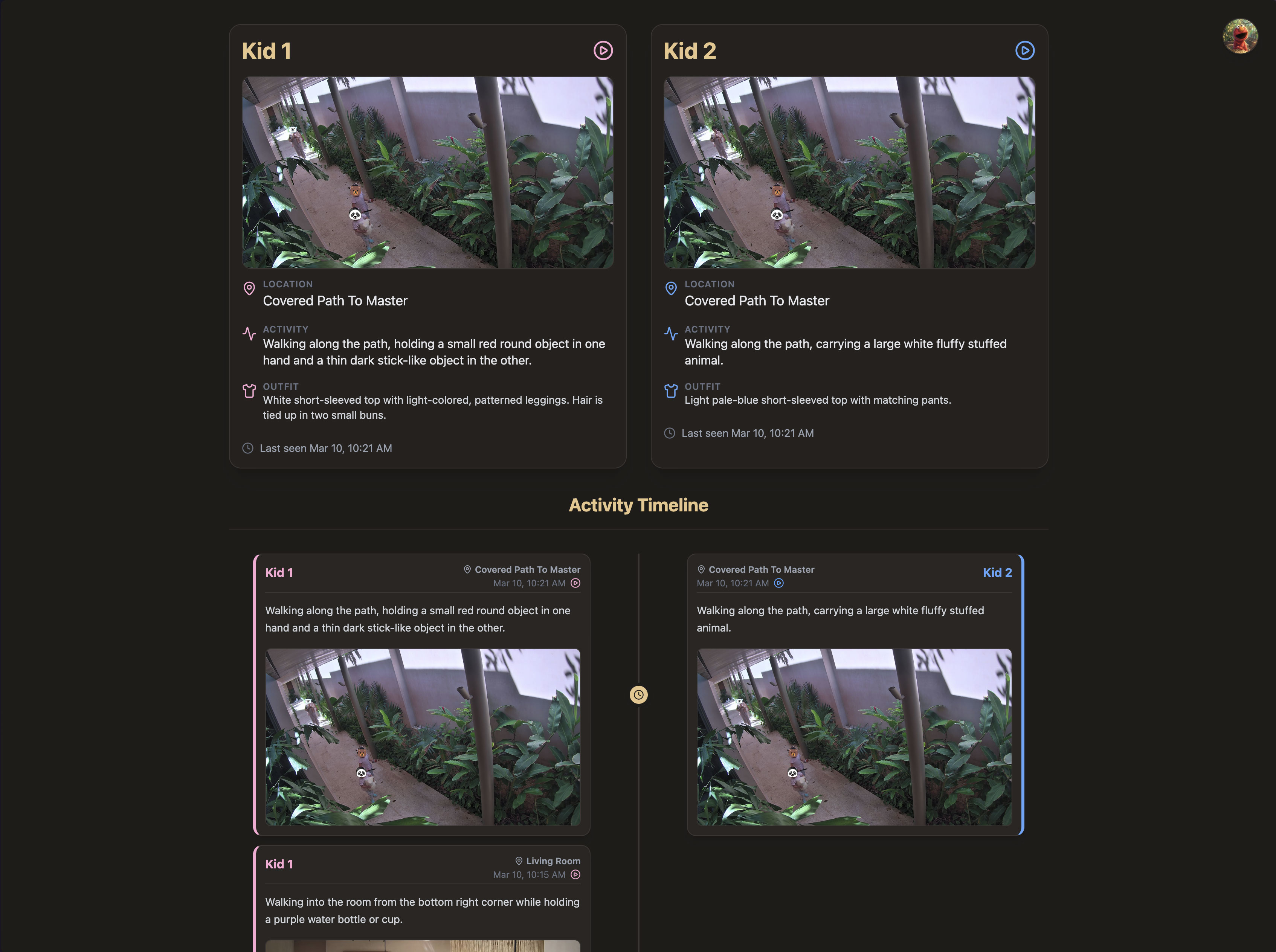

Kids tracker: vision LLM as state machine

Our two toddlers run between rooms constantly, and our Unifi security cameras log every detection to a local server. One morning Elmo suggested we follow them around.

Elmo tried a low-cost solution first. Home Assistant motion event → Python daemon → YOLOv8n on the Unifi RTSP frame. It confidently identified rabbit cages, dark shadows, and abstract wall paintings as "people" or "teddy bears." Even when it found a kid, it couldn't tell which kid.

The final implementation was an inversion: maintain state across frames instead of classifying each one in isolation. Every five minutes a daemon pulls the latest detection snapshots, asks Claude what it sees, and writes outfit details to a kids_outfits.json file. When both kids appear together, Claude uses their heights to refresh the file; solo frames inherit it as context. The Telegram ping fires only when a kid actually changes rooms.
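The "ping only on a room change" part is the easiest piece to sketch. The version below assumes the vision step has already turned a batch of snapshots into a kid-to-room mapping, and it keeps the last known room in its own small JSON file. The `room_changes` function, the state file, the message wording, and the choice to stay silent on a first sighting are all my assumptions, separate from the real kids_outfits.json logic.

```python
import json
from pathlib import Path


def room_changes(state_path: Path, sightings: dict[str, str]) -> list[str]:
    """Return one message per kid whose room differs from the last saved room."""
    state = json.loads(state_path.read_text()) if state_path.exists() else {}
    messages = []
    for kid, room in sightings.items():
        previous = state.get(kid)
        # Assumption: a first-ever sighting sets the baseline without sending a ping.
        if previous is not None and previous != room:
            messages.append(f"{kid} moved from {previous} to {room}")
        state[kid] = room
    # Persist state so the next five-minute run compares against this one.
    state_path.write_text(json.dumps(state))
    return messages
```

Because the comparison is against persisted state rather than the previous frame, a kid who sits in the same room for an hour generates no messages at all.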

One thing I didn't ask for and now use most: every day at 6pm, the tracker sends a digest with conversation prompts for the day. Here is one from a recent day:

The girls had a pretty entertaining afternoon today, peaking with Anyi literally clinging to Lanye's back like a tiny koala out on the covered path. You should definitely ask Lanye about her impromptu mechanic work inspecting the front wheel of the pink ride-on jeep, or what she was planning to make with the basket of building blocks. For Anyi, see if she'll show you the colorful picture books she was intensely studying on the couch or introduce you to the yellow lion plushie she was hanging out with!

The other emergent output: every detection appends a one-line activity description to a JSON ledger committed to a private GitHub repo. After a month, that ledger reads like a journal:

08:24 — standing patiently at the counter while an adult ties up her hair

10:00 — standing barefoot on the tiled floor, holding a large beach ball and appearing to play with it among other beach balls nearby

Final notes

Too much noise from AI agents is just as bad as too little proactivity. After quite a bit of tuning (and self-tuning), the system is mostly quiet while I write this. The AI Brief landed in Lark and Telegram yesterday at 10:03. Iron Elmo has nothing new since the morning Garmin sync. The kids tracker is filtered to one home's cameras only and hasn't fired since 8:04. Almost every run ends in a noop:

07:13 strava noop NO_NEW_ACTIVITY (last activity 2d ago)

07:27 garmin noop already_reported

07:41 lastfm noop 0 new

08:04 kids noop no_candidates site=cdmx skipped_off_site=2

08:05 email noop 0 new

By 6pm, even though I'll spend most of the day at this desk, I'll know what the girls did all day, and at dinner I'll have something specific to ask them about.

My Last.fm history was sitting there for 21 years before Elmo noticed it. Most people have something similar — a notes app full of half-thoughts, a Garmin you stopped checking, an inbox you can't search. Hand one to an agent and ask what it sees.

Next post: the agent that turns our family photos into printable adventure storybooks for the kids.